Poisoning Attacks on Multi-Agent Reinforcement Learning Systems

Chanyeok Choi¹*, Jaehwan Cho¹, Youngmoon Lee¹

¹ Hanyang University

Humanoids 2025 · Late-Breaking Report

TL;DR

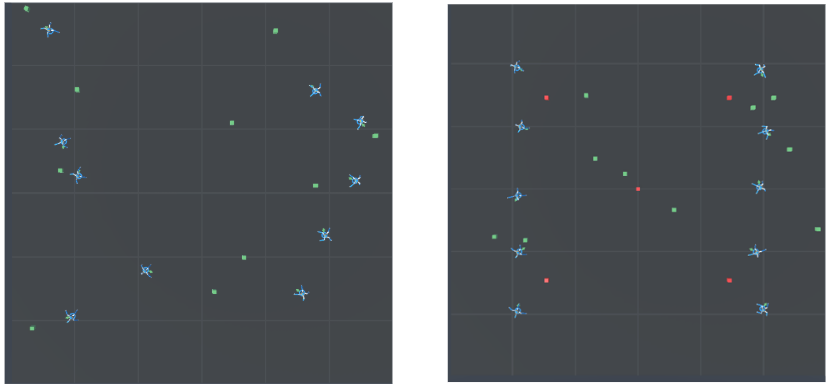

A reward-poisoning attacker agent, trained jointly inside a multi-agent RL system, can scatter high-reward lure points that drag a converged cooperative policy off-trajectory without ever touching weights or other agents’ observations. On a Unity 50×50 m crawler/lure benchmark, the same attack drops the crawler’s cumulative reward by 18.7% (PPO) and 20.9% (SAC) in the multi-agent setting; in the single-agent setting SAC collapses almost entirely (1276 → 23.93, a 98.1% drop). The asymmetry has a structural cause: PPO’s on-policy clipping locks the policy onto whichever lure it samples first, while SAC’s off-policy replay buffer and maximum-entropy objective dilute poisoned samples, except when the buffer is too small to outvote a persistent attacker.

Threat model

Two agent classes coexist in one environment with predefined, fixed reward rules:

| Agent | Goal | Reward structure |
|---|---|---|
| Crawler | Reach the green target, maximize cumulative reward. | +100 on touching a lure (the trap); −1 on removing a lure. |
| Attacker | Maximize crawler distraction. | +1 each time a crawler is lured. |

The attacker has no access to crawler weights, no privileged sensors, no offline corruption of training data. It interacts only through the environment, by placing lure points — the same channel any other agent uses. This is what makes the attack realistic: any adversary that can participate in a shared MARL environment can poison it.
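The reward rules in the table can be written down as a small per-step function. This is a minimal sketch, not the benchmark's code: the event names (`crawler_touched_lure`, `crawler_removed_lure`) are hypothetical, it assumes "a crawler is lured" means the same event as "touching a lure", and it omits the reward for reaching the green target because the source does not state its value.

```python
from dataclasses import dataclass

# Reward constants copied from the threat-model table.
CRAWLER_TOUCH_LURE = 100.0
CRAWLER_REMOVE_LURE = -1.0
ATTACKER_PER_LURED = 1.0


@dataclass
class StepEvents:
    """Hypothetical per-step event flags reported by the environment."""
    crawler_touched_lure: bool = False
    crawler_removed_lure: bool = False


def step_rewards(events: StepEvents) -> tuple[float, float]:
    """Return (crawler_reward, attacker_reward) for one environment step."""
    crawler = 0.0
    attacker = 0.0
    if events.crawler_touched_lure:
        crawler += CRAWLER_TOUCH_LURE
        # Assumption: touching a lure is what counts as "being lured".
        attacker += ATTACKER_PER_LURED
    if events.crawler_removed_lure:
        crawler += CRAWLER_REMOVE_LURE
    return crawler, attacker
```

Both rewards are produced by the environment from the same event, which is the point of the threat model: the attacker needs no channel other than the shared world state.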

The attacker’s playbook has three components:

- Reward manipulation — inject premature rewards before the true goal, subtly altering reward timing so monitoring systems see plausible learning curves.

- Random behavior — relocate the goal object to unreachable positions to break determinism and starve the crawler of stable signal.

- Tempting-reward (lure) attack — sprinkle artificially high-reward points along plausible paths, exploiting reward-addiction dynamics to collapse exploration onto the trap.
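As an illustration of the lure attack only, the sketch below places lure points along the straight line between a crawler's start and its target, with some lateral jitter, inside the 50×50 m arena. In the report the attacker is a trained agent, so this scripted placement is a stand-in; `place_lures`, its parameters, and the straight-line notion of a "plausible path" are all assumptions made for the example.

```python
import random

ARENA_SIDE_M = 50.0  # arena side length, from the benchmark description


def place_lures(start, target, n_lures, jitter=2.0, seed=0):
    """Scatter n_lures points near the start-to-target segment (hypothetical helper)."""
    rng = random.Random(seed)  # seeded so a run can be reproduced
    lures = []
    for i in range(1, n_lures + 1):
        t = i / (n_lures + 1)  # strictly between start (t=0) and target (t=1)
        x = start[0] + t * (target[0] - start[0]) + rng.uniform(-jitter, jitter)
        y = start[1] + t * (target[1] - start[1]) + rng.uniform(-jitter, jitter)
        # Clamp so every lure stays inside the arena.
        x = min(max(x, 0.0), ARENA_SIDE_M)
        y = min(max(y, 0.0), ARENA_SIDE_M)
        lures.append((x, y))
    return lures
```

Calling `place_lures((5.0, 5.0), (45.0, 45.0), n_lures=4)` yields four points spread along the diagonal, each one closer to the crawler than the true target is at some stage of the run.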

Why PPO and SAC respond differently

The paper’s headline result is that PPO is structurally more vulnerable to lure-style poisoning in multi-agent settings, even though SAC shows a marginally larger relative drop in the multi-agent rows (20.9% vs. 18.7%). The reason is mechanical:

- PPO is on-policy with a clipped surrogate objective. Once a lure perturbs the rollout distribution, the next batch over-samples the lure region; the clip then constrains policy updates around the current (lure-biased) policy. The result is self-reinforcing trap capture — the policy can’t take a large enough step to escape the basin.

- SAC is off-policy with maximum-entropy regularization. The replay buffer dilutes poisoned samples across thousands of clean transitions, and the entropy term forces continued exploration around any apparent optimum. Lure capture requires the attacker to flood the buffer faster than it cycles.
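To make the clipping argument concrete, the sketch below evaluates the standard PPO clipped surrogate for a single sample and reports whether that sample still contributes a gradient. The function names and the ε = 0.2 default are illustrative assumptions, not values taken from the report.

```python
def clipped_surrogate(ratio: float, advantage: float, eps: float = 0.2) -> float:
    """Per-sample PPO objective: min(r * A, clip(r, 1 - eps, 1 + eps) * A)."""
    clipped_ratio = min(max(ratio, 1.0 - eps), 1.0 + eps)
    return min(ratio * advantage, clipped_ratio * advantage)


def has_gradient(ratio: float, advantage: float, eps: float = 0.2) -> bool:
    """True while the objective still changes with the probability ratio."""
    if advantage > 0:
        # Flat once the ratio has risen past 1 + eps.
        return ratio < 1.0 + eps
    if advantage < 0:
        # Flat once the ratio has fallen below 1 - eps.
        return ratio > 1.0 - eps
    return False  # zero advantage contributes nothing
```

Each update can therefore move the policy only about ε away, in ratio terms, from the policy that collected the batch. If that batch was gathered around a lure, the next policy stays close to the lure-biased one, which is the self-reinforcing step described above.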

The single-agent SAC collapse (1276 → 23.93) is the exception that proves the rule: with one crawler and one attacker, the buffer fills slowly enough that even a small number of lure samples become the dominant signal.
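The dilution argument reduces to simple arithmetic on buffer contents. The sketch below assumes uniform sampling from the replay buffer; the transition counts and batch size in the usage note are hypothetical and are not taken from the report.

```python
def poison_fraction(n_clean: int, n_poison: int) -> float:
    """Share of buffer transitions that come from lure interactions."""
    total = n_clean + n_poison
    if total == 0:
        raise ValueError("buffer is empty")
    return n_poison / total


def expected_poison_per_batch(n_clean: int, n_poison: int, batch_size: int) -> float:
    """Expected number of poisoned transitions in one uniformly sampled minibatch."""
    return batch_size * poison_fraction(n_clean, n_poison)
```

With 500 lure transitions, a buffer holding 99,500 clean transitions is 0.5% poisoned, about 1.28 samples per batch of 256. The same 500 lure transitions against only 500 clean ones make the buffer 50% poisoned, or 128 samples per batch, which is the regime the single-agent collapse points to.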

Results

Cumulative reward at 1M training steps. “Drop” is the crawler’s relative reward loss; the attacker column shows the attacker’s reward under the same attack run.

| Scenario | Crawler (baseline) | Crawler (attack) | Drop | Attacker (attack) |
|---|---|---|---|---|
| Multi-Agent PPO | 528.4 | 429.4 | −18.7% | −2.903 |
| Multi-Agent SAC | 971.3 | 769.9 | −20.9% | −2.449 |
| Single-Agent PPO | 647.5 | 302.5 | −53.3% | +1 |
| Single-Agent SAC | 1276 | 23.93 | −98.1% | −31.43 |
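The "Drop" column is a relative change, which can be recomputed from the two crawler columns. The sketch below does this from the rounded table entries; the dictionary and function names are hypothetical, and small differences from the reported percentages are to be expected if the authors computed the drops from unrounded rewards.

```python
# (baseline, under attack) crawler rewards, copied from the results table.
RESULTS = {
    "Multi-Agent PPO": (528.4, 429.4),
    "Multi-Agent SAC": (971.3, 769.9),
    "Single-Agent PPO": (647.5, 302.5),
    "Single-Agent SAC": (1276.0, 23.93),
}


def relative_drop(baseline: float, attacked: float) -> float:
    """Relative change in percent; negative means the attack reduced reward."""
    return 100.0 * (attacked - baseline) / baseline


if __name__ == "__main__":
    for scenario, (baseline, attacked) in RESULTS.items():
        print(f"{scenario}: {relative_drop(baseline, attacked):.1f}%")
```

From these rounded entries the PPO rows and the single-agent SAC row reproduce the reported figures, while the multi-agent SAC row comes out near −20.7% rather than the reported −20.9%.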

What this means for deployed MARL

Cooperative MARL is at the core of human-interactive robotics: humanoid teams, robot taxis, drone fleets. All of them share the same exposed surface: a reward signal that comes from the environment, not from a trusted oracle. This work shows that an attacker who can only participate in the environment, without stealing weights or corrupting logs, can cut the crawler’s cumulative reward by 18.7% to 98.1%, depending on algorithm and setting.

Two practical takeaways:

- PPO needs a clip-budget defense. On-policy clipping is a feature, but it’s also what locks the policy into a poisoned basin. Detecting trap stickiness (e.g., monitoring KL between lure-region and global policy) is a near-term defense.

- SAC’s robustness is buffer-size dependent. Multi-agent SAC’s resilience comes from sample dilution; tune replay sizes against expected attacker throughput, or the single-agent collapse mode reappears.
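One way to read the KL-monitoring suggestion is sketched below. It assumes a diagonal Gaussian policy and assumes that "lure-region" and "global" behaviour are each summarised by a mean and standard deviation per action dimension. Those summaries, the function names, and the threshold are hypothetical; the report does not specify a detector.

```python
import math
from typing import Sequence


def diag_gaussian_kl(
    mu_p: Sequence[float],
    std_p: Sequence[float],
    mu_q: Sequence[float],
    std_q: Sequence[float],
) -> float:
    """KL(P || Q) between two diagonal Gaussians, summed over action dimensions."""
    kl = 0.0
    for mp, sp, mq, sq in zip(mu_p, std_p, mu_q, std_q):
        kl += math.log(sq / sp) + (sp**2 + (mp - mq) ** 2) / (2.0 * sq**2) - 0.5
    return kl


def flag_trap_region(kl_value: float, threshold: float = 1.0) -> bool:
    """Flag when lure-region behaviour diverges strongly from global behaviour.

    The threshold is an arbitrary placeholder that would need tuning per task.
    """
    return kl_value > threshold
```

A monitor built this way would compute the divergence periodically during training, with P fitted to actions taken near suspicious reward clusters and Q fitted to actions over all visited states. Whether a rising or a collapsing value is the better alarm is an open design choice, and this sketch flags only the rising case.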

What’s next

- Trap-region detectors — auxiliary monitors that flag clip-bounded KL collapse around suspicious reward clusters.

- Robust reward estimation — separating per-agent intrinsic reward streams so a single corrupted channel can’t dominate the shared signal.

- Real-robot transfer — extending from the Unity benchmark to physical fleet scenarios (taxi ride-sharing dispatchers, multi-robot warehouses).

BibTeX

@inproceedings{choi2025poisoning,
  title     = {Poisoning Attacks on Multi-Agent Reinforcement Learning Systems},
  author    = {Choi, Chanyeok and Cho, Jaehwan and Lee, Youngmoon},
  booktitle = {IEEE-RAS International Conference on Humanoid Robots (Humanoids), Late-Breaking Report},
  year      = {2025},
}